...

Working with the ETSI NFV TST 001 reference: http://www.etsi.org/deliver/etsi_gs/NFV-TST/001_099/001/01.01.01_60/gs_NFV-TST001v010101p.pdf

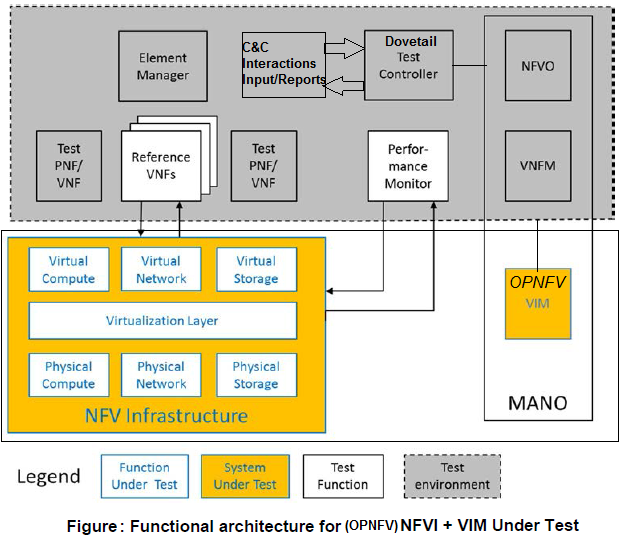

The Dovetail project will focus on tests validating a scope for a System Under Test (SUT) associated with Chapter 4.9 - NFV Infrastructure + VIM Under Test, as adapted for OPNFV (see figure below). The test suite will also define preconditions and assumptions about the state of any platform under evaluation, and will contain pre-deployment validation tests to ensure that the platform is first in a steady state of readiness. The test suite must not require access to OPNFV infrastructure or resources in order to pass.

Aside

Test case requirements

The following requirements should be fulfilled for all tests added to the Dovetail test suite:

- Test cases should favour implementation of a published standard interface for validation

- Where a compliance test suite exists for components of the SUT, this test suite should generally be considered as a baseline for Dovetail testing

- Where no standard is available, provide API support references

- If a standard exists and is not followed, an exemption is required

- The following things must be documented for the test case:

  - Use case specification

  - Test preconditions

  - Basic test flow execution descriptor

  - Post conditions and pass/fail criteria

- The following things may be documented for the test case:

  - Parameter border test case descriptions

  - Fault/error test case descriptions

- Test cases must pass on OPNFV reference deployments

- Tests must not require a specific NFVi platform composition or installation tool

- Tests must not require unmerged patches to the relevant upstream projects

- Tests must not depend on code which has not been accepted into the relevant upstream projects

- (hongbo: This needs to be discussed further. There are several SDN controllers, and some test cases written for a dedicated SDN controller cannot be used with other controllers.)

- Test documentation/implementation file and directory structure (per supported framework)

- Test result storage, structure and test result information management (these should be able to be run publicly or privately)

- Tests must not require features or code which are out of scope for the latest release of the OPNFV project

New test case proposals should complete a Dovetail test case worksheet to ensure that all of these considerations are met before the test case is approved for inclusion in the Dovetail test suite.
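
The requirements above could be turned into a simple worksheet check. The following is only a minimal sketch, not the actual Dovetail worksheet: the `Worksheet` class, its field names, and the `review` function are hypothetical and chosen for illustration. It covers only the documentation and upstream-dependency requirements from the list.

```python
from dataclasses import dataclass, field

# Hypothetical keys for the documentation items that "must be documented".
REQUIRED_DOCS = ("use_case", "preconditions", "test_flow", "pass_fail_criteria")


@dataclass
class Worksheet:
    """Illustrative stand-in for a test case proposal worksheet."""
    name: str
    docs: dict = field(default_factory=dict)  # documentation section -> text
    uses_published_interface: bool = False
    requires_unmerged_patches: bool = False
    requires_specific_installer: bool = False


def review(ws):
    """Return the list of unmet requirements; an empty list means none were found."""
    problems = []
    for key in REQUIRED_DOCS:
        if not ws.docs.get(key, "").strip():
            problems.append(f"missing documentation: {key}")
    if not ws.uses_published_interface:
        problems.append("no published standard interface or API reference given")
    if ws.requires_unmerged_patches:
        problems.append("depends on unmerged upstream patches")
    if ws.requires_specific_installer:
        problems.append("requires a specific platform composition or installer")
    return problems


if __name__ == "__main__":
    draft = Worksheet(
        name="example.case",  # hypothetical test case name
        docs={"use_case": "List flavors", "preconditions": "Deployed platform"},
        uses_published_interface=True,
    )
    for problem in review(draft):
        print(problem)
```

A reviewer would still need to judge the quality of each documented item; a check like this only confirms that nothing required is missing.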

Considerations for the Test Strategy Document

There is a draft test strategy document for OPNFV in preparation (owner: Chris Price).

- JIRA issue: https://jira.opnfv.org/projects/DOVETAIL/issues/DOVETAIL-352

- Gerrit review: https://gerrit.opnfv.org/gerrit/#/c/30811/

Dovetail Test Suite Structure

...

hongbo: the same as those defined in the phase 1 and phase 2

Annotated brainstorming/requirements proposals

Additional Requirements / Assumptions (not yet agreed by Dovetail Group)

These are additional requirements and assumptions that should be considered by the group. This agreement / issue is being tracked in JIRA, under DOVETAIL-352. As these are agreed, they should be moved above into the full list. Once the story is completed, this section can be deleted.

Assumptions

- Tests start from (use) an already installed / deployed OPNFV platform. OPNFV deployment/install testing is not a target of the program (that is for CI).

- DN: Dovetail should be able to test platforms which are not OPNFV scenarios - we have a requirement that OPNFV scenarios should be able to pass the test suite, which ensures that only features in scope for OPNFV can be included

Requirements

- All test cases must be fully documented, in a common format, clearly identifying the test procedure and expected results / metrics to determine a “pass” or “fail” result for the test case.

- DN: We currently list a set of things which must be documented for test cases - is this insufficient, in combination with the test strategy document?

- lylavoie - No, we need to have the actual list of what things are tested in Dovetail, and how those things are tested. Otherwise, how can we even begin to know whether the tool tests the things we think it does (i.e. validate the tool)?

- DN: I agree, but the scope of the test suite is a topic for the test strategy document, and not part of the decision criteria for a specific, individual test. Test case purpose and pass/fail criteria are part of the documentation requirements.

- Tests and tool must support / run on both vanilla OPNFV and commercial OPNFV-based solutions (i.e. the tests and tool cannot use interfaces or hooks that are internal to OPNFV, e.g. something used during deployment / install / etc.).

- DN: Again, there is already a requirement that tests pass on reference OPNFV deployment scenarios

- lylavoie: Yes, but it cannot do that by requiring access to something "under the hood." This might be obvious, but it's an important requirement for Dovetail developers to know.

- DN: Good point. We do have that test cases must use public standard interfaces and APIs.

- Tests and tool must run independent of installer (Apex, Joid, Compass) and architecture (Intel / ARM).

- DN: This is already in the requirements: "Tests must not require a specific NFVi platform composition or installation tool"

- Tests and tool must run independent of specific OPNFV components, allowing different components to “swap in”. An example would be using a different storage than Ceph.

- DN: This is also covered by the above test requirement

- Tool / Tests must be validated for purpose, beyond running on the platform (this may require each test to be run with both an expected positive and negative outcome, to validate the test/tool for that case).

- DN: I do not understand what this proposal refers to

- lylavoie - The tool and program must be validated. For example, if a test case's purpose is to verify that a specific API is implemented or functions in a specific way, we need to verify that the test tool does actually test that API/function. Put differently, we need to check that the test tool doesn't false pass or false fail devices. This is far beyond just a normal CI type test (i.e. did it compile and pass some unit tests).

- DN: This is part of the test strategy document rather than part of the requirements for a specific test

- Tests should focus on functionality and not performance.

- Performance test output could be built in as “for information only,” but must not carry pass/fail metrics.

- DN: This is covered in the CVP already
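
The "validated for purpose" proposal discussed above (running each test with both an expected positive and an expected negative outcome) could be sketched as follows. This is an illustration under stated assumptions, not part of the Dovetail tool: `validate_test_case`, `FakeSut`, `BrokenSut`, and `check_flavor_api` are hypothetical names, and the "flavor" API is only a stand-in for whatever interface a real test case would exercise.

```python
def validate_test_case(test_fn, known_good, known_bad):
    """Run test_fn against one SUT expected to pass and one expected to fail.

    test_fn takes a SUT object and returns True (pass) or False (fail).
    """
    false_fail = not bool(test_fn(known_good))  # a good SUT was rejected
    false_pass = bool(test_fn(known_bad))       # a bad SUT was accepted
    return {
        "false_pass": false_pass,
        "false_fail": false_fail,
        "valid": not (false_pass or false_fail),
    }


class FakeSut:
    """Hypothetical reference SUT that implements the API under test."""
    def __init__(self, flavors):
        self._flavors = flavors

    def list_flavors(self):
        return self._flavors


class BrokenSut:
    """Hypothetical SUT that does not implement the API under test."""
    def list_flavors(self):
        raise RuntimeError("API not implemented")


def check_flavor_api(sut):
    """Hypothetical test case: passes if the SUT returns at least one flavor."""
    try:
        return len(sut.list_flavors()) > 0
    except Exception:
        return False


if __name__ == "__main__":
    good, bad = FakeSut(["m1.small"]), BrokenSut()
    # A sound test case is accepted as valid.
    print(validate_test_case(check_flavor_api, good, bad))
    # A test that always passes is flagged as a false pass.
    print(validate_test_case(lambda sut: True, good, bad))
```

Whether such checks belong in the per-test requirements or in the test strategy document is the open question in the discussion above; the sketch only shows what the check itself might look like.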

Additional brainstorming