The CNTT Reference Model specification contains information and requirements to which VSPERF (and other projects) need to respond by enhancing their current capabilities, so that the implied testing is addressed more fully.

For example, the current (9/11) reference model master .MD has:

UPDATE (Oct 2, 2019): Note that Table 4-4 will likely move to Chapter 8 of the Reference Model, because it includes Benchmarks that are externally measured. This is certainly within the spirit of "exposed" metrics, but it seems that the contributors really want measurements that monitor the performance exposed to VNFs. As a result, each of the above metrics will need to be replaced by its passively observable counterpart, and many of these metrics will come from ETSI NFV TST008.

Per-VNF-C, per-vNIC, and per-vCPU metrics have implications for VSPERF testing features, and may require new methods in TST009.

For example, the Test Setups in TST009 begin to address the needed Per-V* testing, in Section 6.2,

where the Multiple PVP configuration can be used to assess the performance of 1, 2, 4, 8, 12, or 16 parallel VMs (or Pods), and a characteristic curve can be provided using the same resources and TST001 workload profile as the VNF of interest (if this specialization is needed). This setup assumes that the Benchmarking Goals and Use cases (Section 6.1 of TST009) require pairs of ports and bidirectional testing. Single-port unidirectional testing is also possible with Multiple PVP.

The Test Device/Traffic Generator needs to generate flows designed to pass through the vSwitch and reach the VM, where the processing needed to return the flows takes place.

vNICs themselves can be monitored for octets transferred, and ultimately the limiting bottleneck of each multiple parallel VM setup should be determined.
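As a rough illustration of how a characteristic curve over the Multiple PVP VM counts might be summarized, the sketch below takes aggregate throughput measured at each VM count and reports the per-VM share and the point where scaling flattens. The function names, the `min_gain` threshold, and the "less than 5% gain" rule are assumptions made for this example; they are not defined in TST009 or in VSPERF, and the sketch does not identify which resource is the bottleneck.

```python
# VM (or Pod) counts named for the Multiple PVP setup.
VM_COUNTS = (1, 2, 4, 8, 12, 16)


def characteristic_curve(aggregate_by_vms):
    """Return (vm_count, aggregate, per_vm) rows sorted by VM count.

    `aggregate_by_vms` maps a VM count to the aggregate throughput
    measured with that many parallel VMs (any consistent unit).
    """
    return [(n, agg, agg / n) for n, agg in sorted(aggregate_by_vms.items())]


def saturation_point(aggregate_by_vms, min_gain=0.05):
    """Return the first VM count where aggregate throughput stops scaling.

    Hypothetical rule: scaling has flattened when the relative gain over
    the previous step is below `min_gain`. Returns None if throughput
    keeps growing across all measured steps.
    """
    rows = sorted(aggregate_by_vms.items())
    for (_, prev), (n, agg) in zip(rows, rows[1:]):
        if prev > 0 and (agg - prev) / prev < min_gain:
            return n
    return None
```

The VM count returned by `saturation_point` only marks where to start looking; per-vNIC octet counters and host metrics would still be needed to say what the limiting resource actually is.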

Below are some considerations and questions, where "the present document" refers to TST009:

Intern Project: Container Networking Testing and Benchmarking

VSPERF-Container Networking Benchmarking and Testing

Understanding the performance of containerized applications under various scenarios is critical for cloud native migration. One option is a black-box approach for profiling various (compute/network intensive) containerized applications under different CPU and Memory constraints. Profiling refers to studying how sensitive the container is to the availability of shared resources. The approach is black-box because it does not rely on any specific adaptations or agents within the containers. The main components of this approach are the Resource Limiter, Metrics Collection, Data Analytics, and Traffic/Load Generators. CPU and Memory are varied in pre-defined steps (10%, 25%, 50%, 75%, 90%), and for every combination the sensitivity of the application container to that combination is calculated. A model will be built to categorize the sensitivity of the application.
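The sweep over CPU and Memory steps can be sketched as follows. This is a minimal illustration only: the text does not define how sensitivity is calculated, so the `sensitivity` score here (relative performance loss against an unconstrained baseline) is an assumption, and `measure` is a hypothetical caller-supplied function standing in for the Resource Limiter, Load Generator, and Metrics Collection components.

```python
from itertools import product

# Pre-defined resource steps from the text, as fractions of the full allocation.
STEPS = (0.10, 0.25, 0.50, 0.75, 0.90)


def resource_grid(steps=STEPS):
    """Return every (cpu_fraction, mem_fraction) combination to test."""
    return list(product(steps, steps))


def sensitivity(baseline, measured):
    """Assumed score: relative performance loss versus the baseline.

    0.0 means no degradation; 1.0 means the metric dropped to zero.
    """
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    return max(0.0, (baseline - measured) / baseline)


def profile(measure, baseline):
    """Score each grid point using the hypothetical `measure(cpu, mem)`.

    `measure` is expected to apply the limits, drive load, and return
    the observed performance metric (for example, requests per second).
    """
    return {
        (cpu, mem): sensitivity(baseline, measure(cpu, mem))
        for cpu, mem in resource_grid()
    }
```

With five steps per resource the grid has 25 combinations, so each profiled application needs 25 measurement runs plus one unconstrained baseline run.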

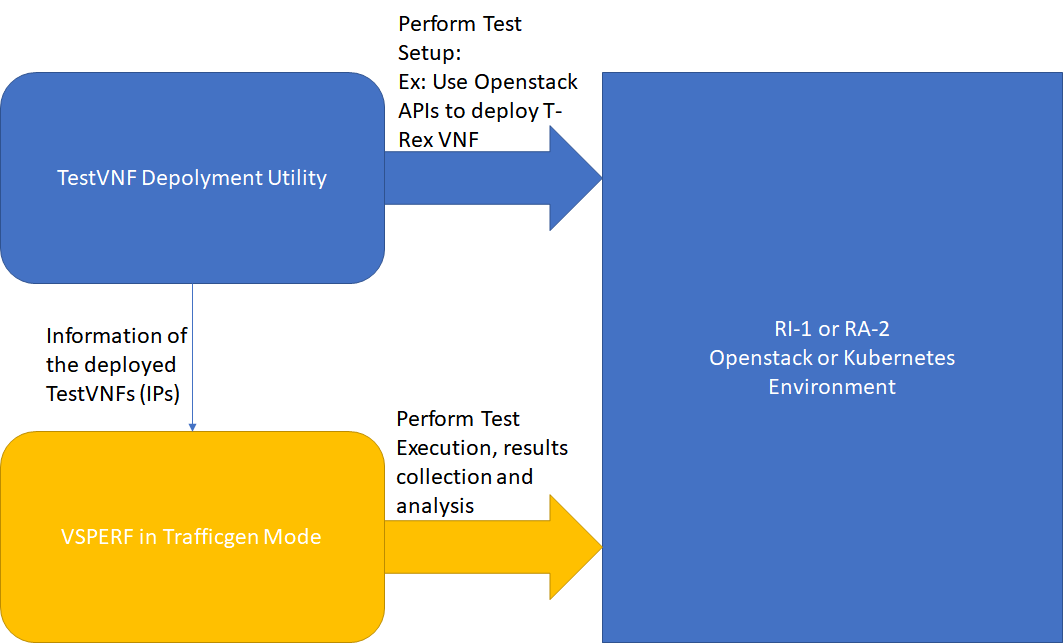

Following the September 2019 CNTT workshop, the OPNFV test project participants seemed agreeable to conducting some tests on a surrogate for the "reference implementation", using the tools we have and what we can easily enhance with the CNTT Reference Model evaluations in mind. The main goal is to look for gaps between the Reference Model Requirements and the Test Tool Capabilities, and to decide the disposition/resolution of each gap (work to add test capability, or de-scope some requirements if enough information is available). There is no schedule yet for this testing, but resources do seem to be becoming available, and it seemed agreeable that the next meet-up would be a Hackfest for the OPNFV testing community.