| Name | ML Category |
|---|---|
| Jahanvi | Supervised |
| Akanksha | Unsupervised |
| Kanak Raj | Reinforcement |

| Name | Comments on Applicability | Reference |
|---|---|---|


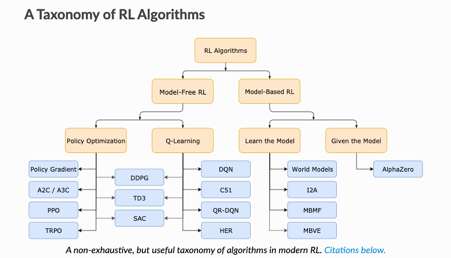

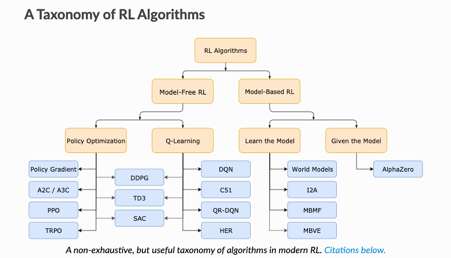

Model-Free vs Model-Based RL

The key distinction is whether the agent has access to (or learns) a model of the environment, that is, a function that predicts state transitions and rewards.
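
To make "a function that predicts state transitions and rewards" concrete, here is a minimal sketch of such a model for a toy problem. The five-state chain, the names `model` and `plan_one_step`, and the reward of 1.0 at the goal are all assumptions made for illustration; they do not come from these notes.

```python
from typing import Tuple

N_STATES = 5          # hypothetical chain: states 0..4, state 4 is the goal
ACTIONS = (-1, +1)    # move left or move right


def model(state: int, action: int) -> Tuple[int, float]:
    """Predict (next_state, reward) for a deterministic toy chain."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    reward = 1.0 if next_state == N_STATES - 1 else 0.0
    return next_state, reward


def plan_one_step(state: int) -> int:
    """Query the model for every action and pick the best predicted reward."""
    return max(ACTIONS, key=lambda a: model(state, a)[1])


# The agent can ask "what would happen if..." without touching the environment.
predicted_next_state, predicted_reward = model(3, +1)
best_action_near_goal = plan_one_step(3)
```

A model-based agent can call something like `model` to look ahead before acting; a model-free agent has no such function and must rely on the transitions it actually experiences.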

| Model-Free | Model-Based |
|---|---|
| Forgoes the potential gains in sample efficiency that come from using a model. | Lets the agent plan ahead by looking at the possible results of a range of choices. |
| Tends to be easier to implement and tune. | A ground-truth model of the task is generally not available. |
| | If the agent wants to use a model, it has to learn one purely from experience. |
| | Learning a model is fundamentally hard. |
| | Requires a willingness to spend a lot of time. |
| | High computation cost. |
| | Can fail to pay off when the agent over-exploits bias in the learned model. |
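
As an illustration of what learning a model purely from experience can look like, the sketch below estimates transition probabilities by counting observed transitions. The four-state environment, its 80% success rate, and the names `true_step` and `learn_model` are hypothetical and chosen only for this example.

```python
import random
from collections import defaultdict


def true_step(state, action, rng):
    """Hypothetical ground truth: action 1 moves right 80% of the time."""
    if action == 1 and rng.random() < 0.8:
        return min(state + 1, 3)
    return state


def learn_model(n_samples, seed=0):
    """Estimate P(next_state | state, action) from sampled transitions."""
    rng = random.Random(seed)
    counts = defaultdict(lambda: defaultdict(int))
    for _ in range(n_samples):
        state = rng.randrange(4)     # states 0..3
        action = rng.randrange(2)    # actions 0 (stay) and 1 (try to move)
        next_state = true_step(state, action, rng)
        counts[(state, action)][next_state] += 1
    # Turn raw counts into empirical probabilities.
    learned = {}
    for key, next_counts in counts.items():
        total = sum(next_counts.values())
        learned[key] = {s2: c / total for s2, c in next_counts.items()}
    return learned


rough = learn_model(40)         # few samples: estimates can be far off
learned = learn_model(20_000)   # many samples: estimates approach the truth
```

With few samples the estimated probabilities can differ noticeably from the true 0.8, which is one way bias enters a learned model; an agent that plans against such a model may be planning against errors rather than against the real environment.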

What to Learn in Model-Free RL

Q-Learning

| Policy Optimization | Q-Learning |
|---|---|
| Optimizes the policy parameters either directly, by gradient ascent on the performance objective, or indirectly, by maximizing local approximations of it. | Learns an approximator for the optimal action-value function. |
| Usually performed on-policy: each update only uses data collected while acting according to the most recent version of the policy. | Usually performed off-policy: each update can use data collected at any point during training. |
| Directly optimizes for the thing you want. | Only indirectly optimizes for agent performance. |
| Tends to be more stable. | Tends to be less stable. |
| Less sample efficient and takes longer to learn, because the data available at each iteration is limited. | Substantially more sample efficient when it works, because it can reuse data more effectively. |
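
The off-policy, data-reusing character of Q-learning can be sketched with a small tabular example. This is a minimal illustration under assumed settings, not a reference implementation: the five-state chain, the constants `GAMMA` and `ALPHA`, and the helper names are all hypothetical. The data is collected once by a purely random policy and then replayed many times to learn action values.

```python
import random

N_STATES, GOAL = 5, 4
ACTIONS = (0, 1)            # 0 = left, 1 = right
GAMMA, ALPHA = 0.9, 0.5     # assumed discount factor and learning rate


def step(state, action):
    """Deterministic toy chain; reaching GOAL gives reward 1 and ends the episode."""
    next_state = min(state + 1, GOAL) if action == 1 else max(state - 1, 0)
    done = next_state == GOAL
    return next_state, (1.0 if done else 0.0), done


def collect_random_data(n_steps, seed=0):
    """Gather transitions with a random behavior policy (not the learned one)."""
    rng = random.Random(seed)
    buffer, state = [], 0
    for _ in range(n_steps):
        action = rng.choice(ACTIONS)
        next_state, reward, done = step(state, action)
        buffer.append((state, action, reward, next_state, done))
        state = 0 if done else next_state
    return buffer


def q_learning(buffer, n_passes=50):
    """Replay the same stored transitions repeatedly (off-policy updates)."""
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(n_passes):
        for s, a, r, s2, done in buffer:
            # Bootstrapped target: reward plus discounted best next-state value.
            target = r if done else r + GAMMA * max(q[s2])
            q[s][a] += ALPHA * (target - q[s][a])
    return q


buffer = collect_random_data(2000)
q = q_learning(buffer)
greedy_policy = [max(ACTIONS, key=lambda a: q[s][a]) for s in range(GOAL)]
```

The same `buffer` is swept repeatedly even though none of it was generated by the greedy policy being learned. An on-policy policy-optimization method would instead need to gather fresh data after each policy update.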

| Name | Comments on Applicability | Reference |
|---|---|---|
| Q-Learning | | |